- December 28, 2023

- By Pablo Diego-Rosell

- Artificial Intelligence, Bayesian Statistics, Social Science, Survey Methods

Introduction

Historically, the social sciences have been dominated by the development of theories. However, as noted by scholars like Watts (2017) and Kelley (1927), this has led to a clutter of competing theories, giving rise to an ‘incoherency problem.’ This situation is compounded by measurement challenges like the ‘jingle-jangle fallacy,’ where different terms are used for the same concept, or the same term is applied to different concepts, causing confusion and inconsistency in understanding and application. The incoherency problem hinders the practical application of research and blurs the clarity of scientific understanding. We summarize here a recent article by Pablo Diego-Rosell for The Gallup Organization, in the context of the DARPA-ASIST program, presenting an innovative solution to this issue: Descriptive to Executable Simulation Modeling (DESIM), a tool leveraging expert crowdsourcing for model validation.

The DARPA-ASIST program

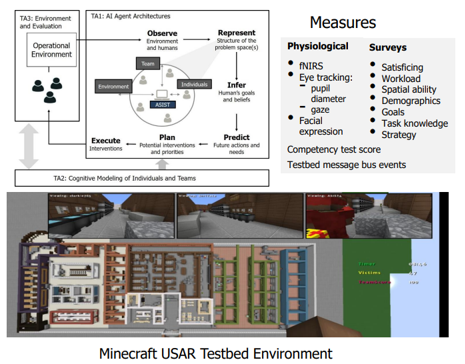

We recently completed a 4-year project for DARPA's Artificial Social Intelligence for Successful Teams (ASIST) program. The goal of ASIST was to develop AI theory and systems that demonstrate the machine social skills needed to infer the goals and beliefs of human partners, predict what they will need, and offer context-aware interventions, so that they can act as adaptable and resilient AI teammates.

The ASIST program used a variety of urban search and rescue (USAR) and bomb-defusing scenarios in a custom-built Minecraft testbed. The complexity of these missions, exacerbated by the unpredictability of victim conditions and distribution, makes optimal planning crucial yet challenging.

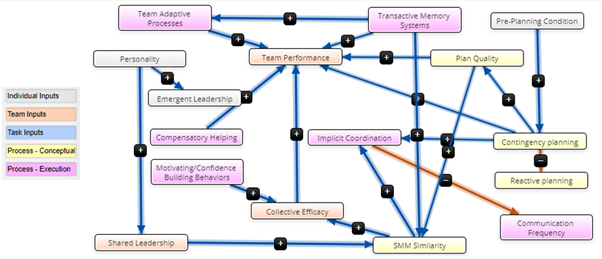

The Gallup-ROSAN team developed a Team Planning Model encapsulating multiple layers of team dynamics, from individual skills to team-level communication and leadership, that would enable the AI teammate to better infer, predict and intervene towards improved team effectiveness.

Descriptive to Executable Simulation Modeling (DESIM)

To validate and refine this model, Gallup employed an expert crowdsourcing approach, reaching out to a multi-disciplinary group of experts in the fields of AI and team effectiveness. This method aimed to gather both qualitative and quantitative feedback, which would then be used to develop Bayesian priors for testing each model prediction. The qualitative feedback targeted the operational aspects of the model, such as variable definitions and hypothesis formulation, while the quantitative feedback focused on generating weight distributions for these variables.
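As a rough illustration of how expert weights can become an informative prior, the sketch below fits a Beta distribution to a set of weights by the method of moments. This summary does not say which distribution family was used, so the Beta choice, the function name `beta_prior_from_weights`, and the example weights are all assumptions made for illustration. It also assumes each expert's answers for one edge have already been reduced to a single weight strictly between 0 and 1.

```python
from statistics import mean, variance


def beta_prior_from_weights(weights):
    """Fit Beta(alpha, beta) to expert weights in (0, 1) by the method of moments.

    Assumption: a Beta prior is adequate for a bounded edge weight. The
    family actually used in the paper is not stated in this summary.
    """
    m = mean(weights)
    v = variance(weights)  # sample variance (n - 1 denominator)
    if not 0.0 < m < 1.0:
        raise ValueError("mean weight must lie strictly between 0 and 1")
    if v <= 0.0 or v >= m * (1.0 - m):
        raise ValueError("variance is incompatible with a Beta distribution")
    common = m * (1.0 - m) / v - 1.0
    return m * common, (1.0 - m) * common


# Hypothetical weights from five experts for a single edge.
expert_weights = [0.2, 0.3, 0.4, 0.5, 0.6]
alpha, beta = beta_prior_from_weights(expert_weights)
print(f"Beta prior: alpha={alpha:.2f}, beta={beta:.2f}")
```

A tight cluster of expert weights yields large `alpha` and `beta` (a confident prior), while wide disagreement yields a flatter one, which matches the intent of letting expert consensus determine how informative each prior is.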

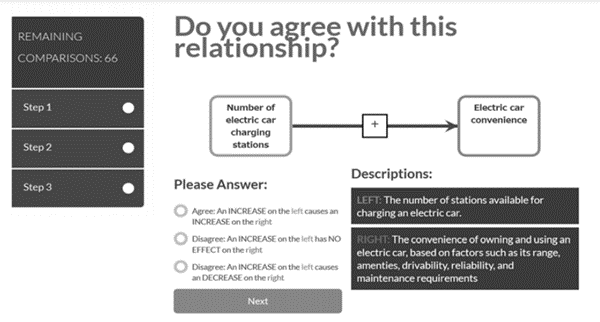

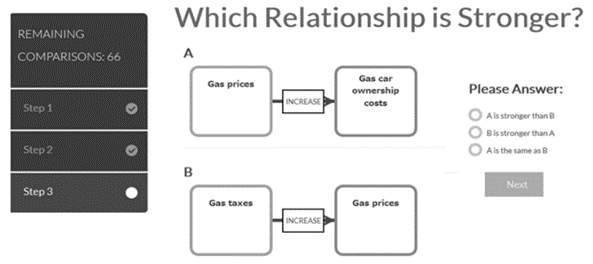

The DESIM methodology involves four steps: formulating the descriptive causal model as a fuzzy cognitive map (FCM), deconstructing it into pairwise comparisons, crowdsourcing these comparisons, and finally, computing edge weights to make the model computational. This process not only validates the model but also quantifies the strength of causal relationships, minimizing ambiguity and aligning the model with established standards in literature and practice.
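To make the idea of an executable FCM concrete, here is a minimal sketch of how a weighted causal map can be simulated. The concept names are taken from the results discussed in this post, but the edge weights, the starting activations, and the helper names (`fcm_step`, `run_fcm`) are hypothetical. The update rule shown (a sigmoid of the weighted sum of incoming activations, with driver concepts held fixed) is one common FCM convention, not necessarily the one used in the paper.

```python
import math

# Hypothetical edge weights in [-1, 1]. The concept names come from this post;
# the numbers are invented for illustration only.
EDGES = {
    ("Transactive Memory Systems", "Shared Mental Model Similarity"): 0.7,
    ("Implicit Coordination", "Communication Frequency"): -0.4,
}
# Driver concepts with no incoming edges are held fixed as scenario inputs.
CLAMPED = {"Transactive Memory Systems", "Implicit Coordination"}


def sigmoid(x):
    """Squash an activation into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def fcm_step(state, edges, clamped):
    """Apply one synchronous update to every non-clamped concept."""
    new_state = {}
    for concept, value in state.items():
        if concept in clamped:
            new_state[concept] = value
            continue
        influence = sum(
            weight * state[src]
            for (src, dst), weight in edges.items()
            if dst == concept
        )
        new_state[concept] = sigmoid(influence)
    return new_state


def run_fcm(state, edges, clamped, max_steps=100, tol=1e-6):
    """Iterate until activations stop changing or max_steps is reached."""
    for _ in range(max_steps):
        next_state = fcm_step(state, edges, clamped)
        if max(abs(next_state[c] - state[c]) for c in state) < tol:
            return next_state
        state = next_state
    return state


# Hypothetical scenario: strong transactive memory, strong implicit coordination.
scenario = {
    "Transactive Memory Systems": 0.8,
    "Implicit Coordination": 0.9,
    "Shared Mental Model Similarity": 0.5,
    "Communication Frequency": 0.5,
}
for concept, activation in run_fcm(scenario, EDGES, CLAMPED).items():
    print(f"{concept}: {activation:.3f}")
```

Once the edge weights come from expert judgments rather than guesses, the same loop can be rerun under different input scenarios to compare predicted outcomes.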

For each hypothesis (edge) in the FCM, an agreement question assesses overall support for the proposed hypothesis. Pairwise comparisons of the relative strength of different predictions are then used to estimate edge weights. (Note that the captions below are from the original paper by Pfaff et al., 2015, and do not correspond to our FCM.)

Captured from Pfaff, Drury & Klein (2015)

Expert Recruitment

The Gallup-ROSAN team then proceeded to recruit experts in the team effectiveness and AI disciplines by targeting ASIST program performers, including 262 researchers representing leading AI research institutions in the US: Carnegie Mellon University, Arizona State University, University of Arizona, University of Central Florida, University of Southern California, Cornell University, Rutgers University, Charles River Associates, Dynamic Object Language Labs (DOLL), Institute for Human & Machine Cognition (IHMC), and Aptima. We also reached out to Gallup’s own research and development division and published researchers in the field of team effectiveness, based on a search of the peer-reviewed literature on Elsevier’s Scopus database.

Results

The Analytic Hierarchy Process converted the experts’ pairwise comparisons into scores, providing a measure of model validity. The response rate was modest, at 4.0%, but the feedback was rich and informative. The analysis of edge weights, derived from the survey, revealed varying degrees of consensus among the experts. Some predictions, like the effect of Transactive Memory Systems on Shared Mental Model Similarity, received strong support, while others, like the role of Implicit Coordination in reducing Communication Frequency, were more contentious.
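For readers unfamiliar with the Analytic Hierarchy Process, the sketch below shows the core calculation: a reciprocal pairwise comparison matrix is reduced to a normalized weight vector (its principal eigenvector, found here by power iteration), and a consistency ratio flags contradictory judgments. The comparison matrix is made up, the function names are ours, and the code is a generic AHP illustration rather than the exact procedure used in the paper.

```python
from math import fsum

# Saaty's random consistency index for matrices of size 1 to 9.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}


def ahp_weights(matrix, iterations=100):
    """Return (weights, lambda_max) for a positive reciprocal matrix.

    Power iteration converges to the principal eigenvector. Because the
    weights are kept normalized to sum to 1, the sum of (matrix @ weights)
    estimates the principal eigenvalue lambda_max.
    """
    n = len(matrix)
    weights = [1.0 / n] * n
    lambda_max = float(n)
    for _ in range(iterations):
        product = [fsum(matrix[i][j] * weights[j] for j in range(n))
                   for i in range(n)]
        lambda_max = fsum(product)
        weights = [p / lambda_max for p in product]
    return weights, lambda_max


def consistency_ratio(lambda_max, n):
    """Saaty's consistency ratio; values below 0.10 are usually accepted."""
    if n < 3:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    consistency_index = (lambda_max - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]


# Hypothetical judgments from one expert comparing three edges:
# entry [i][j] states how much stronger edge i is judged to be than edge j.
comparisons = [
    [1.0, 3.0, 5.0],
    [1 / 3, 1.0, 2.0],
    [1 / 5, 1 / 2, 1.0],
]
weights, lambda_max = ahp_weights(comparisons)
print("weights:", [round(w, 3) for w in weights])
print("consistency ratio:", round(consistency_ratio(lambda_max, 3), 4))
```

Repeating this calculation per expert gives one weight vector each, and the spread of those vectors across experts is what shows how much consensus a given edge commands.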

The qualitative feedback provided valuable insights into the model and its operationalization. Experts pointed out potential ambiguities and offered suggestions for improvement. For instance, some respondents questioned the clarity of certain relationships and the inclusion of specific variables. This feedback highlighted the need for clarity and comprehensiveness in model construction.

For a detailed description of the results, please see the full paper.

Conclusions and Implications

The DESIM exercise allowed us to collect thorough quantitative and qualitative expert feedback on our causal model of team effectiveness. Qualitative feedback on the operationalization of the model (variable definitions, proposed hypotheses) and on the design of the DESIM survey itself produced valuable insights that will improve future efforts. Additionally, the quantitative feedback allowed us to generate weight distributions to serve as informative Bayesian priors for testing each prediction.

About Rosan International

ROSAN is a technology company specializing in the development of Data Science and Artificial Intelligence solutions, with the aim of tackling the most challenging global projects. Contact us to discover how we can help you gain valuable insights from your data and optimize your processes.